
Shadow AI: The Invisible Risk in Modern Engineering Teams

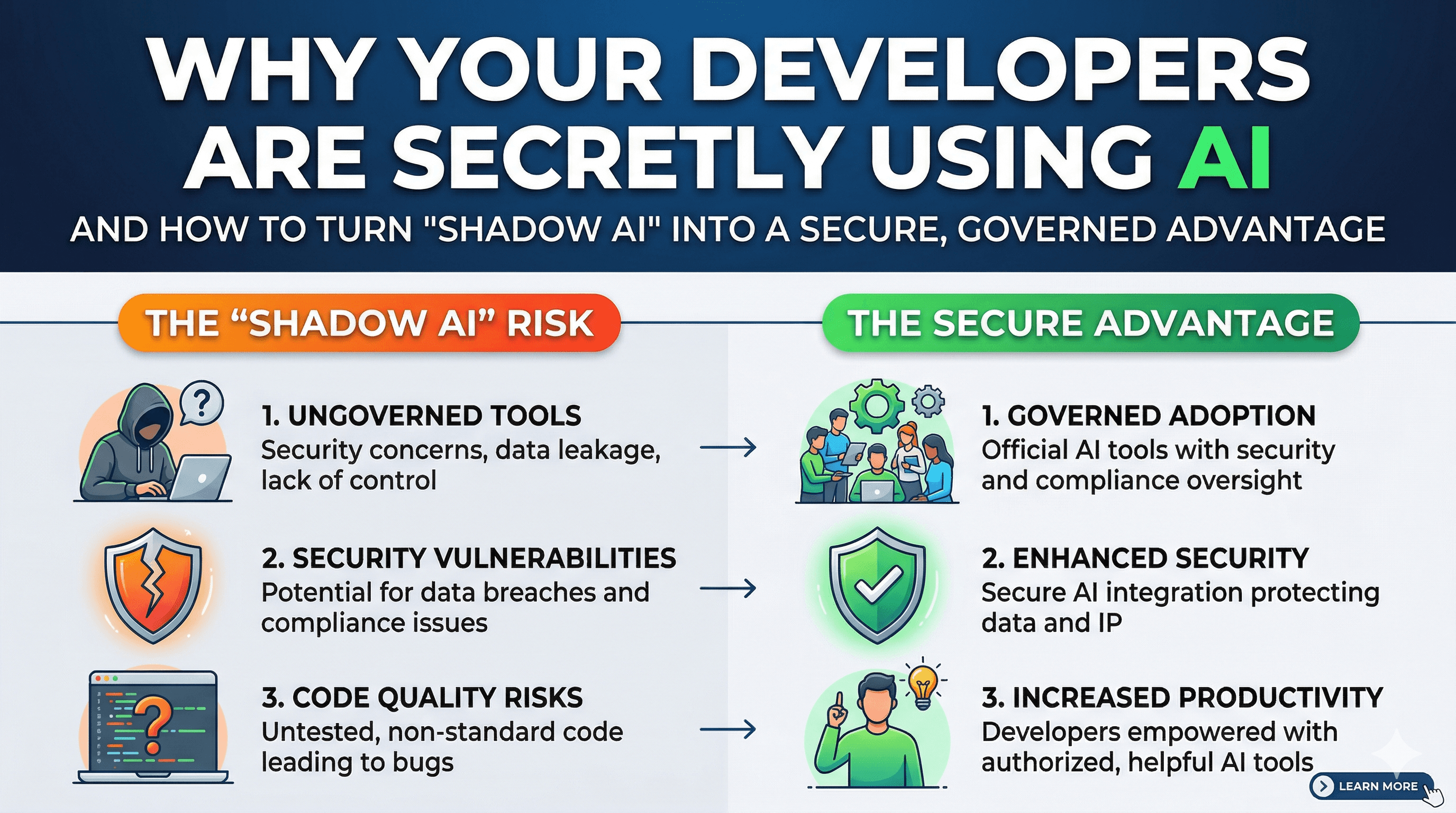

Why your developers are secretly using AI, and how to turn 'Shadow AI' into a secure, governed advantage.

Written by Codehouse Author

Developers are under more pressure than ever. Whether it's meeting tight sprint deadlines or modernizing complex legacy systems, the allure of a 10x productivity boost is irresistible. This has led to the explosion of Shadow AI—the use of unauthorized or unvetted AI tools within the development lifecycle. While the intentions are almost always positive, the lack of organizational oversight creates a "Wild West" environment where sensitive data flows freely into public models without a safety net.

As we discussed in our guide on the AI Agent Orchestrator, the goal for a senior engineer in 2026 isn't to stop AI usage, but to professionalize it. When a developer pastes a proprietary algorithm into a public web chat to "fix a bug" or "optimize for performance," they are effectively leaking intellectual property. This is the core tension of Shadow AI: it provides immediate local value to the individual while creating long-term systemic risk for the enterprise.

The Rise of Shadow AI in Engineering

Why do developers turn to Shadow AI? The answer is simple: friction. If the official company-approved AI tool is outdated, slow, or overly restrictive, engineers will find a way around it. They use personal ChatGPT or Claude accounts to generate code and documentation, and image generators such as Midjourney for mockups or even architectural diagrams. This "underground" usage means that the organization loses visibility into which code is AI-generated and, more importantly, what data has been shared with third-party providers.

In our exploration of AI Automation for Daily Tasks, we emphasized that automation should be a team-wide asset. When AI is used in the shadows, the "Golden Prompts" and specialized workflows remain trapped in individual silos. This fragmentation prevents the team from building a collective intelligence that scales beyond a single developer's keyboard.

The Hidden Dangers of Ungoverned AI

The risks of Shadow AI go far beyond simple data leaks. In a production environment, reliability and security are non-negotiable. When AI-generated code is introduced without proper vetting or context, it can bring in "Ghost Dependencies": libraries, APIs, or architectural patterns that the AI hallucinated, or that come with restrictive licenses. Here are the primary dangers we see in the field today:

PII and Secret Leaks: Developers accidentally pasting logs containing real user data or hardcoded API keys into public LLMs for debugging purposes.

Hallucinated Security: AI suggesting deprecated cryptographic methods or insecure configuration patterns that look correct but fail under a penetration test.

Licensing Contamination: AI-generated code that reproduces GPL-licensed software, potentially subjecting a proprietary codebase to copyleft obligations and creating legal liability.

Lack of Reproducibility: Code generated from an unrecorded, non-deterministic prompt that happened to work once, leaving the rest of the team unable to regenerate, review, or maintain it.
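
Ghost Dependencies are one of the few risks on this list that can be checked mechanically. Here is a minimal sketch. It assumes the AI-generated snippet is Python and uses a pre-merge step to parse the snippet's imports and flag any top-level module that does not resolve in the project's environment. The function name find_unresolved_imports is our own illustration, not part of an existing tool.

```python
import ast
import importlib.util


def find_unresolved_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that are not installed.

    Only absolute imports are checked; relative imports (`from . import x`)
    point at the local package, not at a third-party dependency.
    """
    tree = ast.parse(source)
    modules: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            modules.add(node.module.split(".")[0])
    # find_spec returns None when nothing importable matches the name.
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


if __name__ == "__main__":
    ai_snippet = (
        "import json\n"
        "import os.path\n"
        "from totally_made_up_helpers import secure_hash\n"
    )
    print(find_unresolved_imports(ai_snippet))  # ['totally_made_up_helpers']
```

A clean result is not proof of safety. The check says nothing about hallucinated functions inside real modules or about licensing. A package name that does resolve could also be a look-alike that someone registered to exploit exactly this kind of hallucination. Treat the check as a cheap first filter ahead of human review.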

From "Ban" to "Enable": The Senior Architect's Role

A Senior Architect's job is to build guardrails, not walls. History has shown that banning "Shadow IT" (and now Shadow AI) simply drives the behavior further underground. Instead, the focus should be on establishing a "Governed AI Workbench." This means moving away from consumer-grade web interfaces and toward enterprise-grade API integrations whose terms explicitly exclude your data from being used to train the provider's models.
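
Guardrails are easier to agree on when they are written down as code. One hypothetical way to express a Governed AI Workbench is an egress allowlist that internal tooling, or an outbound proxy, consults before it sends any prompt. The hostnames below are placeholders. The check only enforces where data goes. Whether an approved provider trains on that data remains a contractual question.

```python
from urllib.parse import urlparse

# Placeholder allowlist: substitute the hosts your organization has approved,
# such as an internal LLM gateway or a developer's local Ollama instance.
APPROVED_AI_HOSTS = {
    "llm-gateway.internal.example.com",
    "localhost",
}


def is_approved_ai_endpoint(url: str) -> bool:
    """Allow HTTPS to approved hosts, and plain HTTP only to localhost."""
    parsed = urlparse(url)
    host = parsed.hostname  # lower-cased with any port removed; None if absent
    if host is None or host not in APPROVED_AI_HOSTS:
        return False
    if parsed.scheme == "http":
        # Unencrypted traffic is tolerated only when it never leaves the machine.
        return host == "localhost"
    return parsed.scheme == "https"
```

With this check in place, a call such as is_approved_ai_endpoint("https://chat.example.org/") returns False. The tooling can then refuse the request, or point the developer to the sanctioned gateway instead.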

Modern engineering teams are increasingly turning to local LLM deployments. By using tools like Ollama or dedicated VPC-hosted instances of Llama 3 or Mistral, you can give your team the power of AI while keeping the data 100% within your infrastructure. This approach aligns perfectly with the "Privacy-First" mindset we teach in our Advanced Node.js and .NET Architecture courses, where system boundaries are strictly enforced.
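
A local deployment needs surprisingly little glue code. Here is a minimal sketch that calls Ollama's local REST API using only the Python standard library. It assumes Ollama is running on its default port (11434) and that the model has already been pulled with ollama pull llama3. The helper names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local port


def build_generate_request(prompt: str, model: str = "llama3",
                           url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        url,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", timeout: float = 120.0) -> str:
    """Send the prompt to the local model and return the generated text."""
    req = build_generate_request(prompt, model)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        payload = json.load(resp)
    # With "stream": false, Ollama replies with a single JSON object whose
    # "response" field holds the full completion.
    return payload["response"]
```

Splitting request construction from the network call keeps the part that decides where data goes easy to review and test. Because the URL points at localhost, the prompt stays on the developer's machine.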

The Rule of 4: Shadow AI Scenarios

To identify if your team is currently operating in the "Shadows," look for these four distinct scenarios that signal a need for better AI governance:

The "Secret" Refactor: A developer refactors a 1,000-line legacy method over a weekend using an unapproved browser extension, introducing logic that no one else on the team understands.

The "Anonymous" Debugger: Using a public AI to analyze production stack traces that contain real customer names, emails, or transaction IDs because the local logs are "too hard to read."

The "Bypassed" Configuration: Asking an AI how to bypass a local security policy or corporate firewall rule because "it's slowing down the build process," inadvertently creating a security hole.

The "Shadow" Procurement: Entire sub-teams subscribing to third-party AI "copilots" on personal credit cards to avoid the six-month enterprise procurement cycle.
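
Tooling can go a long way toward reducing the "Anonymous" Debugger scenario. The idea is to scrub obvious identifiers before a log excerpt reaches any model, approved or not. The sketch below is a deliberately small, regex-based redaction pass. The patterns and labels are illustrative assumptions, and they will miss things like customer names. Catching those generally requires structured logging or a dedicated PII-detection service.

```python
import re

# Illustrative patterns only; production setups should lean on a maintained
# secret/PII scanner rather than a short list of regular expressions.
REDACTION_RULES = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("AWS_KEY_ID", re.compile(r"\bAKIA[0-9A-Z]{16}\b")),  # long-term AWS access key ID shape
    ("BEARER_TOKEN", re.compile(r"(?i)\bbearer\s+[A-Za-z0-9._~+/=-]+")),
    ("IPV4", re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")),
]


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with [REDACTED_<LABEL>] and count hits per label."""
    counts: dict[str, int] = {}
    for label, pattern in REDACTION_RULES:
        text, hits = pattern.subn(f"[REDACTED_{label}]", text)
        if hits:
            counts[label] = hits
    return text, counts
```

The per-label counts are useful on their own. A debugging helper can log them, so the team learns how often real data would have been pasted into a chat window, without logging the data itself.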

Building an AI-First Engineering Culture

The ultimate cure for Shadow AI is transparency and education. When developers understand the why behind security policies, they are far more likely to collaborate on official solutions. A centralized "Prompt Library" and a "Model Switchboard" let the organization monitor usage, control costs, and route each task to the model best suited for it.
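
A Prompt Library and a Model Switchboard can start out much simpler than the names suggest. In this hypothetical sketch, the library is a set of versioned templates checked into the repository. The switchboard is a routing table that maps task types to approved models and counts usage for cost reporting. The task names, model names, and endpoints are placeholders.

```python
from collections import Counter
from dataclasses import dataclass, field
from string import Template


@dataclass(frozen=True)
class Route:
    """Which approved model, at which endpoint, handles a kind of task."""
    model: str
    endpoint: str


# Hypothetical shared prompt library: versioned templates that live in the
# repo instead of in individual developers' browser histories.
PROMPT_LIBRARY = {
    "explain-stack-trace/v1": Template(
        "Explain the likely root cause of this (already redacted) stack trace:\n$trace"
    ),
}


@dataclass
class ModelSwitchboard:
    routes: dict[str, Route]
    default_task: str = "general"  # must be a key in `routes`
    usage: Counter = field(default_factory=Counter)

    def route(self, task: str) -> Route:
        """Pick the route for a task, falling back to the default, and record usage."""
        key = task if task in self.routes else self.default_task
        self.usage[key] += 1
        return self.routes[key]


def render_prompt(prompt_id: str, **values: str) -> str:
    """Fill a library template; raises KeyError if a placeholder is missing."""
    return PROMPT_LIBRARY[prompt_id].substitute(**values)


if __name__ == "__main__":
    board = ModelSwitchboard(routes={
        "general": Route("llama3", "http://localhost:11434/api/generate"),
        "code-review": Route("approved-large-model",
                             "https://llm-gateway.internal.example.com/v1"),
    })
    print(board.route("code-review").model)
    print(render_prompt("explain-stack-trace/v1", trace="..."))
    print(dict(board.usage))
```

Unknown task types fall back to the default route instead of failing. That keeps developers on a sanctioned path, and the usage counter shows which tasks might deserve a dedicated route.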

If you want to lead this transition in your organization, you must master the underlying infrastructure that makes governed AI possible. Our Linux Mastery course provides the essential foundation for hosting your own local AI models, managing GPU resources, and securing the shell environments where professional orchestration happens.

For more information on the latest standards in AI safety, refer to the CISA AI Security Best Practices. Moving AI from the shadows into the light is not just a security requirement—it is a competitive necessity in the modern engineering landscape.