Beyond the Single Brain: Why Multi-Agent Systems Are the Future of AI Engineering

Stop treating AI as a single chat window. Learn how a multi-agent architecture is the only way to scale AI automation in complex codebases.

Written by

Codehouse Author

If 2025 taught us anything, it's that Large Language Models (LLMs) have a "ceiling." No matter how large the context window becomes, a single AI model eventually succumbs to Context Noise—the phenomenon where irrelevant details from a massive codebase drown out the specific logic needed to solve a problem. In 2026, the solution isn't a bigger model; it's Multi-Agent AI Systems.

At Codehouse, we’ve moved past the era of "chatting" with a single bot. Instead, we use a team of specialized agents that mimic a human engineering department. By delegating tasks from an orchestrator like Gemini CLI to execution-focused workers like the Generalist agent, we’ve effectively eliminated the "Context Tax" that plagues traditional AI workflows.

1) The "One Brain" Bottleneck

The primary limitation of a single-agent approach is that the AI must be everything at once: the architect, the coder, the tester, and the project manager. When you ask a single AI to "refactor this 50-file module," it has to hold the entire architecture and every line of code in its "working memory" simultaneously. This leads to hallucinations, missed edge cases, and generic code that ignores your project’s Clean Architecture standards.

Multi-Agent AI Systems solve this by enforcing a Separation of Concerns. Just as you wouldn't expect your Lead Architect to spend 8 hours writing boilerplate unit tests, you shouldn't expect your primary AI agent to handle low-level execution tasks while trying to maintain a high-level strategic vision.

2) The Architecture of Intelligence: Gemini CLI & Generalist

To see Multi-Agent AI Systems in action, look at our current workflow. We use Gemini CLI as the "Strategic Orchestrator." Its job is high-level: it reads the project rules, analyzes the file structure, and formulates a plan. It stays out of the minutiae of file editing, stepping in directly only for small, surgical changes.

When a task requires high-volume work, such as "Update the license headers in 100 files" or "Convert all legacy DTOs to C# 12 Records," Gemini CLI doesn't do it directly. It invokes the Generalist agent. The Generalist is a sub-agent that starts with a clean slate: it receives a specific, narrow instruction and a limited set of tools. This keeps execution fast and precise, free from the "mental fatigue" (the accumulated context) of the primary orchestrator.
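To make the delegation step concrete, here is a minimal Python sketch. It is not Gemini CLI's API, nor how the Generalist agent is actually invoked: `Mission`, `GeneralistAgent`, `delegate`, `REGISTRY`, and both tool functions are hypothetical names, and no model is called. It only shows the shape of the idea: each mission gets a fresh agent with an empty history and access to only the tools that mission grants.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool signature: takes a file path, returns a short result string.
Tool = Callable[[str], str]


@dataclass(frozen=True)
class Mission:
    """A narrow task handed from the orchestrator to a sub-agent (illustrative)."""
    instruction: str
    files: tuple[str, ...]
    allowed_tools: frozenset[str]


@dataclass
class GeneralistAgent:
    """Stand-in for an execution-focused sub-agent; no model is called here."""
    tools: dict[str, Tool]
    history: list[str] = field(default_factory=list)  # starts empty: a clean slate

    def run(self, mission: Mission, tool_name: str) -> list[str]:
        if tool_name not in self.tools:
            raise PermissionError(f"tool {tool_name!r} was not granted for this mission")
        self.history.append(mission.instruction)
        return [self.tools[tool_name](path) for path in mission.files]


def delegate(mission: Mission, registry: dict[str, Tool], tool_name: str) -> list[str]:
    """Spawn a fresh agent that only sees the tools the mission allows."""
    granted = {name: fn for name, fn in registry.items() if name in mission.allowed_tools}
    return GeneralistAgent(tools=granted).run(mission, tool_name)


# Usage: the "license headers" example, restricted to a single tool.
def add_license_header(path: str) -> str:
    return f"header added: {path}"


def delete_file(path: str) -> str:
    return f"deleted: {path}"


REGISTRY: dict[str, Tool] = {"add_license_header": add_license_header, "delete_file": delete_file}
mission = Mission(
    instruction="Prepend the standard license header",
    files=("src/a.py", "src/b.py"),
    allowed_tools=frozenset({"add_license_header"}),
)
print(delegate(mission, REGISTRY, "add_license_header"))
# ['header added: src/a.py', 'header added: src/b.py']
```

Creating a new agent per mission, rather than reusing one long-lived worker, is what gives the sub-agent its clean slate; the allowlist is the "limited set of tools" expressed in code. Asking this mission for `delete_file` raises `PermissionError` even though the tool exists in the registry.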

3) The "Rule of 4" for Multi-Agent Workflows

When building or using Multi-Agent AI Systems, we follow four non-negotiable patterns to ensure production-grade reliability:

Strategic Decomposition: The orchestrator must break a large request into independent "Missions" before delegating.

Context Compression: Sub-agents should only be given the specific files and context needed for their task, preventing noise.

Parallel Execution: Specialized agents can run in parallel (e.g., one agent fixing tests while another updates documentation), significantly shortening the development cycle.

Verification Loops: A "Validator Agent" (often the orchestrator) must review the output of the "Worker Agent" before the changes are committed.
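The four patterns can be sketched together in a few dozen lines of Python. This is an illustrative toy under loudly stated assumptions: the codebase is a dict mapping path to file text, the "worker agent" is a plain function that prepends a placeholder header, decomposition simply groups files by top-level directory, and every name (`decompose`, `compress_context`, `worker`, `validate`, `execute`, `run`, `HEADER`) is hypothetical rather than part of any real agent framework.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Optional

HEADER = "# LICENSE HEADER\n"  # hypothetical placeholder for a real license header


@dataclass(frozen=True)
class Mission:
    name: str
    instruction: str
    files: tuple[str, ...]


def decompose(request: str, codebase: dict[str, str]) -> list[Mission]:
    """Strategic Decomposition (toy rule): one independent mission per top-level directory."""
    groups: dict[str, list[str]] = {}
    for path in sorted(codebase):
        groups.setdefault(path.split("/")[0], []).append(path)
    return [Mission(name, request, tuple(paths)) for name, paths in groups.items()]


def compress_context(mission: Mission, codebase: dict[str, str]) -> dict[str, str]:
    """Context Compression: the worker sees only the files its mission names."""
    return {path: codebase[path] for path in mission.files}


def worker(mission: Mission, context: dict[str, str]) -> dict[str, str]:
    """Hypothetical worker agent; a real one would call a model with mission.instruction."""
    return {path: HEADER + text for path, text in context.items()}


def validate(mission: Mission, output: dict[str, str]) -> bool:
    """Validator: reject output that touches unexpected files or lacks the change."""
    return set(output) == set(mission.files) and all(
        text.startswith(HEADER) for text in output.values()
    )


def execute(mission: Mission, codebase: dict[str, str], max_attempts: int = 2) -> Optional[dict[str, str]]:
    """Verification Loop: retry the worker until the validator accepts, or give up."""
    context = compress_context(mission, codebase)
    for _ in range(max_attempts):
        output = worker(mission, context)
        if validate(mission, output):
            return output
    return None  # escalate to a human instead of committing unverified changes


def run(request: str, codebase: dict[str, str]) -> dict[str, str]:
    missions = decompose(request, codebase)
    # Parallel Execution: independent missions run concurrently on a thread pool.
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(lambda m: execute(m, codebase), missions))
    committed: dict[str, str] = {}
    for result in results:
        if result is not None:
            committed.update(result)  # only validated changes are "committed"
    return committed


codebase = {
    "src/app.py": "print('hi')\n",
    "src/util.py": "X = 1\n",
    "docs/conf.py": "project = 'demo'\n",
}
print(sorted(run("Add the license header", codebase)))
# ['docs/conf.py', 'src/app.py', 'src/util.py']
```

In a real system the worker would be a model call and the validator might be the orchestrator reviewing a diff, but the control flow stays the same: decompose, narrow the context, fan out, and commit only what passes review. Returning `None` after repeated failures, instead of committing anyway, marks the point where a human should take over.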

4) Scaling Beyond the Terminal

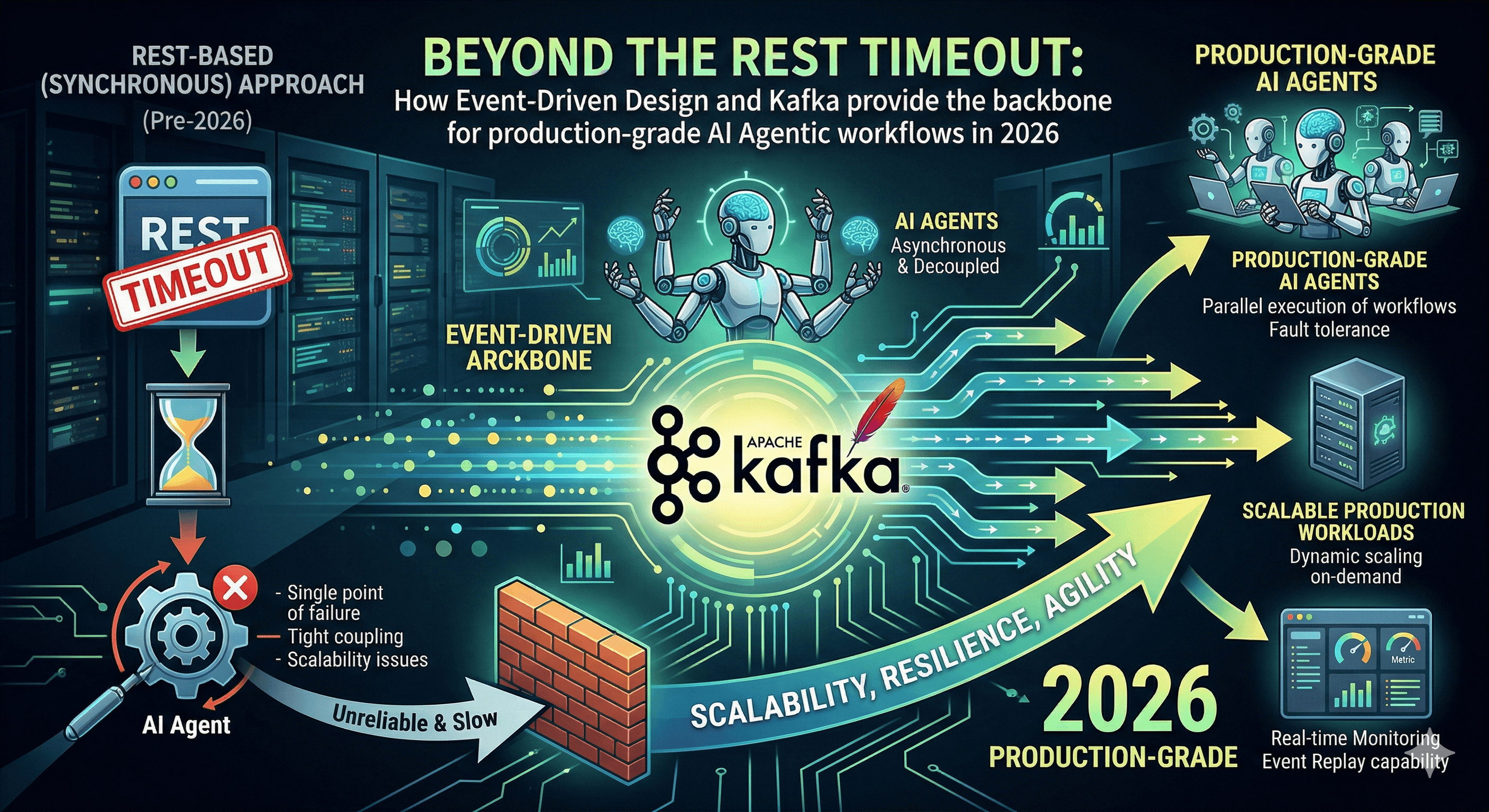

As we discussed in our post on Event-Driven Agent Orchestration, the next step is moving these multi-agent teams into the cloud. By using message brokers like Kafka, we can trigger specialized agents based on system events. Imagine an "On-Call Agent" that detects a production error, spins up a "Research Agent" to find the root cause, and a "Generalist Agent" to propose a patch—all before a human engineer even wakes up.
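The event-driven flow can be prototyped before any infrastructure exists. In the sketch below, an in-memory queue stands in for a broker like Kafka (it is not a Kafka client), and the topic names, the `InMemoryBroker` class, and the three agent handlers are hypothetical placeholders; in practice each handler would wrap a model call.

```python
from __future__ import annotations

import queue
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class Event:
    topic: str
    payload: dict[str, str]


Handler = Callable[[Event], None]


class InMemoryBroker:
    """Stand-in for a broker such as Kafka: a FIFO queue plus topic subscriptions."""

    def __init__(self) -> None:
        self._events: queue.Queue[Event] = queue.Queue()
        self._subscribers: defaultdict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: dict[str, str]) -> None:
        self._events.put(Event(topic, payload))

    def drain(self) -> None:
        """Deliver events in order until the queue is empty; handlers may publish more."""
        while not self._events.empty():
            event = self._events.get()
            for handler in self._subscribers[event.topic]:
                handler(event)


broker = InMemoryBroker()
log: list[str] = []


def on_call_agent(event: Event) -> None:
    # Detects the production error and hands it to the research agent.
    log.append(f"on-call: triage {event.payload['error']}")
    broker.publish("agents.research", event.payload)


def research_agent(event: Event) -> None:
    # Hypothetical: a real agent would read logs and traces to find the root cause.
    cause = f"suspected cause of {event.payload['error']}"
    log.append(f"research: {cause}")
    broker.publish("agents.patch", {**event.payload, "cause": cause})


def generalist_agent(event: Event) -> None:
    # Proposes, but does not apply, a patch; a human or validator reviews it later.
    log.append(f"generalist: patch proposed for {event.payload['cause']}")


broker.subscribe("prod.errors", on_call_agent)
broker.subscribe("agents.research", research_agent)
broker.subscribe("agents.patch", generalist_agent)

broker.publish("prod.errors", {"error": "TimeoutError in checkout"})
broker.drain()
print("\n".join(log))
```

Chaining the agents through topics, rather than having the On-Call Agent call the others directly, lets each agent be scaled, replaced, or replayed independently, which is the main reason to put a broker in the middle. The Generalist here only proposes a patch; applying it would still go through the verification loop.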

The future of software engineering isn't about being a better "prompter." It's about being a Systems Architect of Agents. You are no longer just writing code; you are managing a digital workforce. By mastering Multi-Agent AI Systems, you aren't just 10x more productive—you are building a scalable engine for innovation.

Stay tuned as we continue to explore the Automation of Daily Engineering Tasks through agentic intelligence.
