
The Event-Driven Agent: Why REST is Not Enough for AI Orchestration

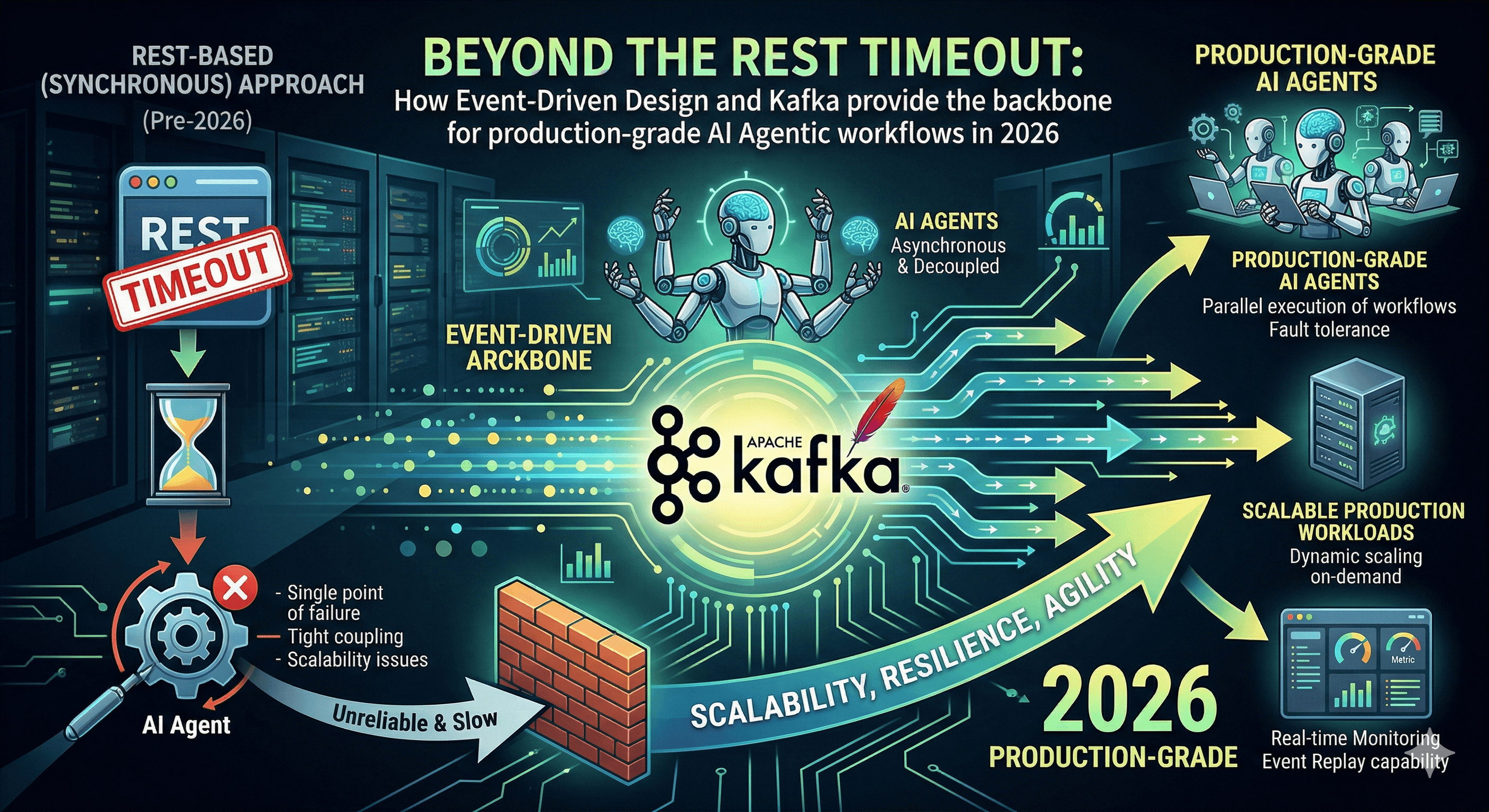

Beyond the REST Timeout: How Event-Driven Design and Kafka provide the backbone for production-grade agentic AI workflows in 2026.

Written by

Codehouse Author

In 2024, most "AI features" were simple: a user clicks a button, a REST API calls an LLM, and the user waits 10 seconds for a response. In 2026, that model is dead. As we move toward Autonomous Agentic Workflows, where agents perform multi-step research, code generation, and verification, we can no longer rely on synchronous HTTP requests. The "Timeout" has become the greatest enemy of the AI Architect.

The solution isn't "faster models"; it's a better architecture. By applying Event-Driven Design (EDD)—specifically using Kafka, CQRS, and Event Sourcing—we can build agents that are resilient, scalable, and fully auditable. This is the transition from "Chatting with AI" to "Engineering with AI."

1) The "Thinking" Problem: Beyond the 30-Second Timeout

Professional AI agents don't just "complete a sentence." They think, they loop, and they verify. A complex refactoring agent might take 2 minutes to analyze a legacy module. In a traditional REST architecture, that single request would outlive the timeouts that gateways, load balancers, and HTTP clients commonly enforce (often 30 to 60 seconds), so the connection would be dropped before the agent finished. By using a message broker like Kafka, we decouple the request for intelligence from the execution of that intelligence.

When a "Refactoring Command" is published to a Kafka topic, the agent picks it up as a consumer. The API acknowledges the request immediately, and the user doesn't wait on a spinning wheel; they subscribe to a status update stream (via SignalR or WebSockets) that reports progress as the agent works. This isn't just a performance optimization; it's a fundamental shift in how we handle Long-Running AI Processes.
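The decoupling described above can be sketched without a real broker. This is a minimal illustration, assuming in-memory `asyncio.Queue` objects as stand-ins for the Kafka command topic and the status stream; the names `RefactoringCommand`, `submit`, and `agent_worker` are hypothetical and not taken from any library.

```python
import asyncio
import uuid
from dataclasses import dataclass


@dataclass
class RefactoringCommand:
    job_id: str
    module: str


async def submit(commands: asyncio.Queue, module: str) -> str:
    """Publish a command and return a job id at once, without waiting on the agent."""
    job_id = uuid.uuid4().hex
    await commands.put(RefactoringCommand(job_id, module))
    return job_id


async def agent_worker(commands: asyncio.Queue, status: asyncio.Queue) -> None:
    """Consume commands and emit status events as the long-running work progresses."""
    while True:
        cmd = await commands.get()
        for step in ("analyzing", "refactoring", "verifying"):
            await asyncio.sleep(0.01)  # stands in for minutes of model and tool calls
            await status.put((cmd.job_id, step))
        await status.put((cmd.job_id, "done"))
        commands.task_done()


async def run_demo() -> list[str]:
    commands: asyncio.Queue = asyncio.Queue()  # assumption: stand-in for the command topic
    status: asyncio.Queue = asyncio.Queue()    # assumption: stand-in for the status stream
    worker = asyncio.create_task(agent_worker(commands, status))

    job_id = await submit(commands, "legacy_billing")  # the caller is free immediately
    seen = []
    while True:
        event_job, state = await status.get()
        if event_job == job_id:
            seen.append(state)
            if state == "done":
                break
    worker.cancel()
    return seen


if __name__ == "__main__":
    print(asyncio.run(run_demo()))  # ['analyzing', 'refactoring', 'verifying', 'done']
```

In a real deployment the two queues would be broker topics and the status loop would be a SignalR or WebSocket push to the browser; the point of the sketch is only that `submit` returns before any agent work has started.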

2) CQRS: The Secret to Agentic Sanity

Command Query Responsibility Segregation (CQRS) is the perfect partner for AI. Why? Because agents are notorious for "hallucinating" if they are overwhelmed with noise. In a Clean Architecture project, an agent shouldn't have to query a complex, normalized SQL database just to understand the state of the world.

Instead, we provide the agent with a Read Model optimized specifically for context. The agent "reads" from a denormalized view that contains exactly what it needs (e.g., "Current System Topology") and "writes" by issuing a Command. This separation ensures that the agent's work is isolated, predictable, and easy to validate before it ever touches your primary Data Access Layer (DAL).
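As a rough sketch of that read/write split, the agent below only ever sees a frozen, denormalized view and can only propose a command object, which is validated before anything is applied. The shapes `TopologyReadModel` and `RemoveDependency` are assumptions made up for illustration, not part of any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TopologyReadModel:
    """Denormalized, read-only context handed to the agent (hypothetical shape)."""
    services: tuple[str, ...]
    dependencies: tuple[tuple[str, str], ...]  # (caller, callee) pairs


@dataclass(frozen=True)
class RemoveDependency:
    """A command the agent may propose; it is plain data, not a database write."""
    caller: str
    callee: str


def validate(cmd: RemoveDependency, view: TopologyReadModel) -> list[str]:
    """Return a list of problems; an empty list means the command may be dispatched."""
    problems = []
    for name in (cmd.caller, cmd.callee):
        if name not in view.services:
            problems.append(f"unknown service: {name}")
    if not problems and (cmd.caller, cmd.callee) not in view.dependencies:
        problems.append("dependency does not exist")
    return problems


def apply(cmd: RemoveDependency, view: TopologyReadModel) -> TopologyReadModel:
    """Write side: produce the next state without mutating the view the agent read."""
    remaining = tuple(d for d in view.dependencies if d != (cmd.caller, cmd.callee))
    return TopologyReadModel(view.services, remaining)


view = TopologyReadModel(
    services=("orders", "billing", "email"),
    dependencies=(("orders", "billing"), ("billing", "email")),
)

good = RemoveDependency("billing", "email")
bad = RemoveDependency("orders", "inventory")  # e.g. a hallucinated service name

print(validate(good, view))  # []
print(validate(bad, view))   # ['unknown service: inventory']
```

Because the command is inert data, a hallucinated service name is rejected at the validation step and never reaches the write side.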

3) The "Rule of 4": Scaling Agents with Event-Driven Architecture

To build a production-grade Agentic system, you need to implement these four patterns:

The Saga Orchestrator: Use an AI agent as the coordinator for a "Distributed Saga." If a microservice fails during a complex transaction, the agent identifies the failure event and triggers the appropriate compensation logic.

Event-Sourced "Black Box" Recording: By using Event Sourcing, every reasoning step, tool call, and decision made by an agent is stored as an immutable event. This provides a complete, replayable audit trail for compliance and debugging.

Parallel Tool Execution: Instead of the agent calling one tool at a time, it publishes "Tool Requests" to a fan-out topic. Multiple worker consumers execute the tasks in parallel, and the agent "joins" the results once all result events have been returned.

Predictive Proactive Events: Don't wait for a user to ask. Use "Anomaly Detected" events from your monitoring system to trigger an agent to perform a root-cause analysis before the user even knows there’s a bug.
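Two of the patterns above, the event-sourced recording and the parallel fan-out with a join, can be sketched together. This is a simplified, in-process illustration: `EventLog`, `run_tool`, and `fan_out_and_join` are hypothetical names, `asyncio.gather` stands in for workers consuming from a fan-out topic, and the tools themselves are simulated.

```python
import asyncio
import json
import time
from dataclasses import dataclass, field


@dataclass
class EventLog:
    """Append-only record of agent activity; events are never updated or deleted."""
    events: list[str] = field(default_factory=list)

    def append(self, kind: str, **data) -> None:
        self.events.append(json.dumps({"kind": kind, "ts": time.time(), **data}))

    def kinds(self) -> list[str]:
        return [json.loads(e)["kind"] for e in self.events]


async def run_tool(name: str, arg: str, log: EventLog) -> tuple[str, str]:
    """Hypothetical tool worker; a real one would consume the request from a topic."""
    await asyncio.sleep(0.01)  # simulated I/O
    result = f"{name}({arg}) ok"
    log.append("ToolCompleted", tool=name, result=result)
    return name, result


async def fan_out_and_join(requests: dict[str, str], log: EventLog) -> dict[str, str]:
    """Record every tool request, run the tools concurrently, then join on all results."""
    for name, arg in requests.items():
        log.append("ToolRequested", tool=name, arg=arg)
    results = await asyncio.gather(*(run_tool(n, a, log) for n, a in requests.items()))
    log.append("ToolsJoined", count=len(results))
    return dict(results)


log = EventLog()
results = asyncio.run(fan_out_and_join({"grep": "TODO", "lint": "src/"}, log))
print(results)      # {'grep': 'grep(TODO) ok', 'lint': 'lint(src/) ok'}
print(log.kinds())  # two ToolRequested, two ToolCompleted, then ToolsJoined
```

The log is the only place state is written, so replaying it shows what the agent asked for, what came back, and when the join happened. A production version would persist the events durably and correlate results by request id rather than relying on in-process ordering.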

As we discuss in our Advanced .NET Architecture Course, the goal of a Senior Architect is to build systems that are "Easy to Change." In the era of AI, that means building systems that are "Easy for Agents to Understand." If your codebase is a mess of synchronous dependencies, your agents will fail. If your codebase is event-driven and modular, your agents will fly.

The future of software isn't just "AI-Powered"—it's Event-Driven AI. It's time to stop waiting for the timeout and start building the backbone of the agentic revolution.
